AI Sentiment Analysis for Patient Outcomes

AI sentiment analysis is transforming behavioral health by analyzing emotions in text and speech to predict patient outcomes.

It helps clinicians detect mental health risks, monitor treatment progress, and personalize care.

For example, AI models can predict changes in depression scores weeks in advance and assess suicide risk with a reported AUC of 0.83.

By integrating tools like BERT and RoBERTa, healthcare providers can gain deeper insights from therapy transcripts, patient communications, and clinical notes.

Key points:

What it does: Converts emotions in unstructured data into actionable insights.

Why it matters: Identifies mental health markers that traditional tools miss, enabling earlier interventions.

How it works: Uses advanced NLP models to score sentiment and predict outcomes.

Applications: Improves patient-reported outcomes, supports personalized treatment, and enhances care quality.

This technology is already reducing hospital readmissions, improving diagnostic accuracy, and saving costs in healthcare systems like the University of Wisconsin–Madison. Tools like Opus Behavioral Health EHR integrate AI sentiment analysis into workflows, making it easier for clinicians to respond to patient needs in real time.

How AI Sentiment Analysis Works

Technologies Behind Sentiment Analysis

AI sentiment analysis is built on Natural Language Processing (NLP), which transforms unstructured clinical text into measurable data. Modern transformer models, like BERT, improve the ability to understand context. Key technologies include word embeddings - algorithms such as word2vec and GloVe - that convert words into numeric vectors, making them easier to analyze mathematically. These vectors are then classified using machine learning algorithms like Support Vector Machines, Naive Bayes, and Decision Trees to determine sentiment polarities (positive, negative, or neutral). For instance, in psychotherapy conversations, BERT achieved a kappa score of 0.48, nearly double the 0.25 score of traditional dictionary-based models like LIWC [8].
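The kappa scores quoted above measure agreement between model labels and human labels after correcting for chance. The following is a minimal sketch of Cohen's kappa in plain Python; the label lists are made-up illustrations, not data from the cited study.

```python
from collections import Counter

def cohen_kappa(rater_a, rater_b):
    """Cohen's kappa: agreement between two label sequences, corrected for chance."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("label sequences must be non-empty and equal in length")
    n = len(rater_a)
    # Observed agreement: share of items where both raters chose the same label.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Expected agreement if each rater labeled at random with their own label frequencies.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1.0 - expected)

# Hypothetical labels for eight utterances (illustration only).
human = ["neg", "neg", "pos", "pos", "neu", "neu", "pos", "neg"]
model = ["neg", "pos", "pos", "pos", "neu", "neg", "pos", "neg"]
kappa = cohen_kappa(human, model)
print(f"kappa = {kappa:.3f}")
```

A kappa of 0 means agreement no better than chance and 1 means perfect agreement, which is why a move from 0.25 to 0.48 is a meaningful gain even though raw accuracy may look higher.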

In healthcare, sentiment scoring often requires adjustments for domain-specific contexts. For example, a January 2023 study led by Danne C. Elbers at the Department of Veterans Affairs recalibrated sentiment scores for 200 clinical terms. After analyzing 3.5 million notes from 10,000 lung cancer patients diagnosed between 2017 and 2019, the team found that the word "positive" had a sentiment score drop from 7.8 to 5.6. This reflected its clinical use to indicate disease presence rather than a favorable outcome [7].
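A rough way to picture this recalibration is a general-purpose lexicon with a clinical override layer. The sketch below assumes a simple mean-of-term-scores scorer; only the two scores for "positive" (7.8 and 5.6) come from the text above, and every other term, score, and function name is hypothetical.

```python
import re

# General-purpose scores; only the value for "positive" is taken from the article.
GENERAL_LEXICON = {"positive": 7.8, "improved": 7.0, "pain": 2.5}  # other values hypothetical
# Clinical recalibration: "positive" often signals disease presence in notes.
CLINICAL_OVERRIDES = {"positive": 5.6}

def score_note(text, lexicon):
    """Mean lexicon score over the terms found in the text, or None if none match."""
    tokens = re.findall(r"[a-z]+", text.lower())
    scores = [lexicon[t] for t in tokens if t in lexicon]
    return sum(scores) / len(scores) if scores else None

# Overrides replace general scores for the same term.
clinical_lexicon = {**GENERAL_LEXICON, **CLINICAL_OVERRIDES}

note = "Biopsy positive for malignancy; patient reports pain."
general_score = score_note(note, GENERAL_LEXICON)
clinical_score = score_note(note, clinical_lexicon)
print(general_score, clinical_score)
```

With the override applied, the same note scores lower, which matches the intuition that "positive" in a pathology context is not good news.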

Data Sources in Behavioral Health

AI leverages a variety of data sources to perform sentiment analysis in behavioral health. Therapy session transcripts, such as those from Motivational Interviewing, provide valuable linguistic data. Additional sources include patient-reported outcome measures and secure messaging platforms, which capture written expressions of mood and emotional well-being. Clinical notes, like discharge summaries and progress reports, offer insights from healthcare providers.

Audio recordings add another layer, with tools like Praat and Kaldi analyzing speech patterns, pitch, and frequency to complement text-based data. However, research shows that text-based features generally have a greater impact on accuracy in mental health applications than acoustic markers. By integrating with Electronic Health Records (EHR) and Patient Relationship Management platforms, AI systems can process this data in the background. As Nancy McGee from IQVIA describes it, these systems act like an "emotional detector", continuously monitoring patient sentiment.

From Data to Insights

Once the data is collected, AI systems follow a structured process to extract meaningful insights. First, they capture and preprocess text or audio input. Then, they create linguistic representations - using techniques like embeddings or n-grams - that generate numerical patterns for analysis.
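The preprocessing and n-gram step can be sketched in a few lines of plain Python. This is an illustrative tokenizer only; production pipelines typically use a dedicated NLP library, and the sample sentence is invented.

```python
import re
from collections import Counter

def tokenize(text):
    """Lowercase the text and keep runs of letters and apostrophes as tokens."""
    return re.findall(r"[a-z']+", text.lower())

def ngram_counts(tokens, n):
    """Count each run of n consecutive tokens."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))

text = "I can't sleep. I can't focus at work."
tokens = tokenize(text)
bigrams = ngram_counts(tokens, 2)
print(bigrams.most_common(3))
```

The resulting counts are the kind of numerical pattern a downstream classifier can consume; repeated phrases such as ("i", "can't") stand out immediately.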

The AI assigns numerical sentiment scores to emotional states. For instance, a 2025 study reported that anxiety-related patient communications produced fear scores of 0.974, while depression-related queries resulted in sadness scores of 0.686 [10]. These scores are displayed on clinical dashboards with real-time visualizations, allowing providers to monitor emotional changes during treatment.

In November 2025, Dr. Jin Ge from the University of California, San Francisco, demonstrated how aggregating sentiment from multiple providers' notes could improve diagnostic accuracy for hepatorenal syndrome patients. This "wisdom of the crowd" approach outperformed traditional lab-based methods [12].
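The article does not describe how the study combined sentiment across providers, so the sketch below assumes the simplest possible aggregation: an unweighted mean of per-note sentiment scores for each patient. The record layout, provider names, and scores are all hypothetical.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical per-note sentiment scores in [-1, 1] from different providers.
notes = [
    {"patient_id": "p1", "provider": "hepatology", "sentiment": -0.5},
    {"patient_id": "p1", "provider": "nephrology", "sentiment": -0.25},
    {"patient_id": "p1", "provider": "nursing", "sentiment": -0.75},
    {"patient_id": "p2", "provider": "hepatology", "sentiment": 0.25},
    {"patient_id": "p2", "provider": "nursing", "sentiment": 0.5},
]

def aggregate_by_patient(records):
    """Average the sentiment of all notes written about each patient."""
    grouped = defaultdict(list)
    for record in records:
        grouped[record["patient_id"]].append(record["sentiment"])
    return {patient: mean(scores) for patient, scores in grouped.items()}

crowd_sentiment = aggregate_by_patient(notes)
print(crowd_sentiment)
```

Averaging across authors dampens any one clinician's writing style, which is the intuition behind treating the care team's notes as a collective signal.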

"Using the 'wisdom of the crowd' doesn't just predict outcomes, it offers a directional insight into what the clinical care team collectively thinks about a patient's condition." - Jin Ge, MD, MBA, Assistant Professor of Medicine, UCSF [12]


Sentiment Analysis and Patient-Reported Outcomes

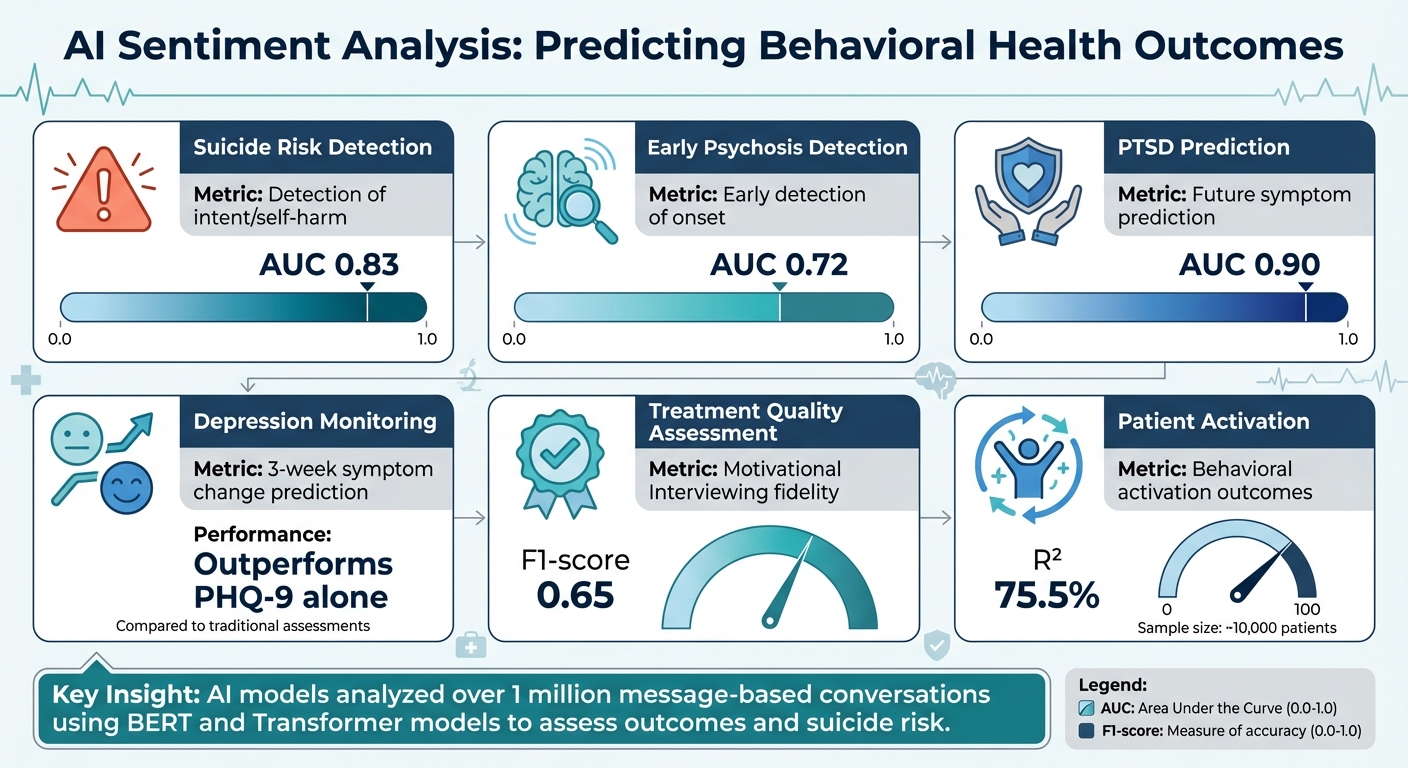

AI Sentiment Analysis Performance Metrics in Behavioral Health Outcomes

Adding Depth to PRO Measures

Standard tools for patient-reported outcomes (PRO), like the PHQ-9 and GAD-7, are great at measuring symptom severity but often overlook the subtleties of emotional expression. This is where AI-driven sentiment analysis steps in, adding a layer of depth by analyzing free-text responses to open-ended questions. These responses can reveal emotional nuances that traditional measures might miss.

Studies have shown that AI can evaluate sentiment in short written responses and even predict changes in depression scores weeks in advance. This remains true even when accounting for initial depression levels and current mood - something traditional word-count software can’t achieve [5]. Prompts about everyday topics like sleep, motivation, or daily routines allow patients to express themselves naturally. AI then identifies subtle emotional cues to help forecast whether symptoms are likely to improve or worsen.

This enriched data provides a more comprehensive view of patient mental health, which ties directly to various behavioral health outcomes.

Behavioral Health Outcomes Affected

The insights gained from sentiment analysis don’t just enhance PRO measures - they also bear directly on clinical outcomes. From identifying risks to monitoring treatment quality, studies report measurable performance for AI-powered sentiment analysis across behavioral health care. For example, research shows that:

AI models can assess suicide risk with an AUC of 0.83.

Early psychosis onset can be detected with an AUC of 0.72.

Predictions for future PTSD symptoms reach an AUC of 0.90 [6].

Additionally, natural language processing (NLP) tools analyzing therapy transcripts have achieved an F1-score of 0.65 when evaluating adherence to evidence-based approaches like Cognitive Behavioral Therapy or Motivational Interviewing [6]. Large-scale studies, involving over a million message-based conversations, have used Transformer-based models like BERT to estimate outcomes and assess suicide risk [1].

| Outcome Category | Specific Metric | AI Performance |
|---|---|---|
| Suicide Risk | Detection of intent/self-harm | 0.83 AUC [6] |
| Psychosis | Early detection of onset | 0.72 AUC [6] |
| PTSD | Prediction of future symptoms | 0.90 AUC [6] |
| Depression | 3-week symptom change prediction | Outperforms PHQ-9 alone [5] |
| Treatment Quality | Motivational Interviewing fidelity | 0.65 F1-score [6] |
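For readers less familiar with the metrics in the table, here is a minimal sketch of how AUC and F1 are computed. The labels and scores are toy values chosen for illustration and do not come from any of the cited studies; the 0.5 decision threshold is likewise an assumption.

```python
def roc_auc(labels, scores):
    """Chance that a random positive case scores above a random negative (ties count half)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative label")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def f1_score(labels, predictions):
    """Harmonic mean of precision and recall for binary labels."""
    tp = sum(1 for y, p in zip(labels, predictions) if y == 1 and p == 1)
    fp = sum(1 for y, p in zip(labels, predictions) if y == 0 and p == 1)
    fn = sum(1 for y, p in zip(labels, predictions) if y == 1 and p == 0)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)

# Toy data: 1 = outcome occurred, score = model's predicted risk.
labels = [1, 0, 1, 0, 0, 1]
scores = [0.9, 0.4, 0.6, 0.7, 0.2, 0.8]
predictions = [1 if s >= 0.5 else 0 for s in scores]  # assumed threshold

auc = roc_auc(labels, scores)
f1 = f1_score(labels, predictions)
print(f"AUC = {auc:.3f}, F1 = {f1:.3f}")
```

AUC summarizes ranking quality across all thresholds, while F1 depends on the single threshold chosen, so the two numbers in the table are not directly comparable.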

Sentiment analysis can also help detect patient activation - the emotional markers that signal a patient's engagement in their own care. Research indicates that sentiment analysis can predict behavioral activation outcomes with an R² of 0.755 (75.5% of variance explained) in studies involving around 10,000 patients [6]. These findings show how enriched PRO measures can lead to actionable insights for clinicians.

Applications Across Care Settings

One of the strengths of sentiment analysis is its flexibility - it can be applied across different care settings, each with its own priorities and data sources. For example:

Outpatient settings: Sentiment analysis of provider notes has shown that declines in sentiment often align with measurable health deteriorations, allowing for earlier interventions [7].

Telehealth and therapy platforms: Sentiment analysis is used to monitor therapeutic relationships and patient safety in real time. By analyzing more than a million conversations, these platforms can identify treatment outcomes, evaluate key therapy elements, and assess suicide risk [1]. The best part? This happens automatically, without adding to a provider’s documentation workload.

Residential and hospital settings: Sentiment analysis extends to patient feedback and online reviews on platforms like RateMDs or health forums. By examining this unstructured data, administrators can pinpoint unmet patient needs, gaps in social support, and areas for quality improvement [3]. This approach captures patient experiences that structured surveys often miss, offering a fuller picture of care quality.

These examples highlight how sentiment analysis integrates seamlessly into clinical workflows, providing valuable insights across the continuum of care. From outpatient clinics to digital platforms and hospital settings, this technology is reshaping how we understand and respond to patient needs.

Clinical and Operational Applications

Real-Time Clinical Decision Support

AI-driven sentiment analysis is changing how clinicians respond to high-risk situations by raising real-time alerts from clinical notes or conversation logs. Instead of waiting for scheduled assessments, providers can act as soon as patterns indicating suicide intent, self-harm, or opioid use disorder (OUD) emerge. Reported discrimination is good: AI models for suicide risk assessment have achieved an Area Under the Curve (AUC) of 0.83 [1]. For example, in April 2025, the University of Wisconsin–Madison implemented an AI-powered OUD screener that was associated with lower odds of 30-day readmission (odds ratio 0.53) and savings of $6,801 per avoided readmission [13].
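An odds ratio is easy to misread as a percentage drop in the readmission rate, so a small worked example may help. The counts below are entirely hypothetical and were picked only to land near an odds ratio of 0.53; they are not the study's data.

```python
def odds_ratio(events_a, total_a, events_b, total_b):
    """Odds of the event in group A divided by the odds in group B."""
    non_events_a = total_a - events_a
    non_events_b = total_b - events_b
    if non_events_a <= 0 or non_events_b <= 0 or events_b <= 0:
        raise ValueError("each group needs events and non-events to form odds")
    return (events_a / non_events_a) / (events_b / non_events_b)

# Hypothetical counts: readmissions out of 400 patients per group.
screened_readmitted, screened_total = 40, 400   # 10.0% readmitted
usual_readmitted, usual_total = 70, 400         # 17.5% readmitted

ratio = odds_ratio(screened_readmitted, screened_total, usual_readmitted, usual_total)
relative_risk = (screened_readmitted / screened_total) / (usual_readmitted / usual_total)
print(f"odds ratio = {ratio:.3f}, relative risk = {relative_risk:.3f}")
```

In this toy case the odds ratio is about 0.52 while the readmission rate itself falls from 17.5% to 10%, a relative risk of about 0.57, so the two measures are close but not interchangeable.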

Natural Language Processing (NLP) further enhances care by identifying shifts in conversations, such as increased negativity or warmth. Advanced systems can even predict which interventions are likely to work best based on prior session data [1]. Sentiment-based digital phenotypes have shown their ability to estimate the likelihood of a patient dropping out within 90 days, achieving an AUC of 0.81 [15]. These insights allow clinicians to adjust their strategies to meet individual needs more effectively.

Personalizing Treatment Plans

Sentiment analysis goes beyond real-time alerts, offering a deeper understanding of patient experiences to refine treatment plans. By capturing the nuances of emotional and physical symptoms, clinicians can develop more holistic approaches to care. As Nature highlights:

"Generative AI supports bottom-up, narrative-based approaches that process language in a flexible and context-aware way... making PROs a true lever for more personalised, meaningful, and inclusive care" [2].

This approach helps clinicians see how symptoms like emotional numbness and physical fatigue interact in daily life, rather than relying solely on isolated metrics.

AI tools can also predict changes in depressive symptoms weeks in advance by analyzing brief written responses, which take less than 10 minutes to collect and cost under $1 for entire datasets [5]. NLP pinpoints moments of heightened emotional distress, or "hotspots", during therapy sessions, enabling clinicians to focus on the most critical aspects of treatment [6]. Additionally, AI models fine-tuned to detect cognitive distortions or negative symptoms support targeted cognitive-behavioral interventions. Tools like ambient dictation systems can automatically summarize therapy sessions, highlighting subtle symptoms that might otherwise be missed, aiding in more precise treatment planning [6][4].

Program-Level Quality Improvement

Sentiment analysis also plays a crucial role at the program level, helping organizations monitor performance and allocate resources more effectively. By analyzing aggregated sentiment scores from clinical narratives - such as therapist notes and discharge summaries - administrators can uncover patterns linked to better outcomes, including improved treatment adherence and lower readmission rates [11].

Real-time sentiment dashboards provide operational teams with instant insights into emotional shifts during therapy sessions, allowing them to prioritize patients in acute distress [9]. These tools can categorize discussions by condition or risk, such as depression, anxiety, or suicidal ideation, automating triage processes and delivering timely support to high-risk individuals [16]. In October 2025, researchers from the University of Sargodha and King Faisal University used the RoBERTa-Large model to analyze student mental health, achieving a 97% accuracy rate in classifying user-generated content into seven mental health categories [16].
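One simple way a dashboard might surface an emotional shift is to compare a patient's recent sentiment with their own earlier baseline. The sketch below is an assumption about how such a rule could look, not a description of any specific product; the window size and drop threshold are hypothetical defaults that would need clinical tuning.

```python
from statistics import mean

def flag_decline(scores, window=3, drop=0.3):
    """Flag when the mean of the latest `window` scores sits at least `drop` below the first `window`."""
    if len(scores) < 2 * window:
        return False  # not enough history to compare baseline and recent periods
    baseline = mean(scores[:window])
    recent = mean(scores[-window:])
    return baseline - recent >= drop

# Hypothetical per-session sentiment scores in [-1, 1], oldest first.
declining = [0.5, 0.5, 0.5, 0.25, 0.0, 0.0]
stable = [0.5, 0.5, 0.5, 0.5, 0.5, 0.5]

print(flag_decline(declining), flag_decline(stable))
```

Comparing each patient against their own baseline, rather than a population cutoff, avoids flagging people whose language is habitually flat or negative.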

Sentiment analysis also enhances service quality monitoring. By examining unstructured feedback from public platforms and internal reviews, organizations can identify gaps in care and areas for improvement [3]. These operational advancements complement clinical tools and personalized treatment strategies, creating a unified approach to behavioral health care. With mental health conditions projected to impose a $6 trillion economic burden annually by 2030 [10], these AI-driven efficiencies are becoming essential for sustainable care delivery.

Implementing Sentiment Analysis in Behavioral Health Systems

Technical and Workflow Requirements

To integrate sentiment analysis into behavioral health workflows, the first step is selecting the right model. Options include lexicon-based models (relying on predefined dictionaries), machine learning models (supervised or unsupervised), and deep learning models (such as neural networks) [17][14]. The decision depends on factors like the volume and complexity of the data and the resources available. These systems must handle diverse data streams, including EHR notes, therapy session transcripts, sensor data, and consented social media content [18][19].

Real-time dashboards play a key role by tracking emotional changes as they happen, allowing clinicians to respond quickly [9]. However, a human-in-the-loop approach remains critical to ensure that AI supports, rather than replaces, clinical expertise. Akshat Santhana Gopalan highlights this balance:

"NLP tools should enhance, not replace, clinical judgment, and therapists should maintain authority over the way they understand and respond to the emotional information given" [9].

It's also essential to regularly audit these systems for bias. Training data that fails to represent diverse mental health populations can lead to skewed or unfair outcomes [17][19]. For high-risk scenarios, such as detecting suicidal ideation, protocols must include clear escalation paths to human providers. However, current AI models for such tasks often achieve precision and recall rates below 80% [18].
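An escalation path of this kind can be expressed as a small routing rule in which the model only decides who looks at a message first, never whether a human looks at all for elevated scores. The thresholds, action names, and function below are hypothetical; a real protocol would be set by clinical leadership.

```python
from dataclasses import dataclass

@dataclass
class Triage:
    action: str
    reason: str

def route_message(risk_score, review_threshold=0.3, urgent_threshold=0.7):
    """Map a model risk score in [0, 1] to a human-facing action (thresholds are assumptions)."""
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be between 0 and 1")
    if risk_score >= urgent_threshold:
        return Triage("page_on_call_clinician", "score at or above urgent threshold")
    if risk_score >= review_threshold:
        return Triage("queue_for_clinician_review", "score at or above review threshold")
    return Triage("routine_monitoring", "score below review threshold")

for score in (0.85, 0.45, 0.10):
    print(score, route_message(score).action)
```

Because recall is imperfect, the review threshold is deliberately set low here so that borderline cases reach a clinician rather than being silently dismissed.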

Once the technical foundation is in place, the next focus shifts to ethical considerations and regulatory compliance.

Ethics and Compliance

To meet HIPAA requirements, organizations must establish BAAs with AI vendors, adhere to the Minimum Necessary Standard for PHI, and prioritize the use of de-identified data whenever possible [20][22]. Psychotherapy notes, which receive extra protection under HIPAA, typically require explicit patient authorization for any use beyond treatment [22].
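As a rough illustration of the Minimum Necessary idea, a record can be reduced to an allow-list of fields before it leaves the EHR. The field names below are hypothetical, and this is not HIPAA de-identification on its own: free-text notes can still contain names, dates, and other identifiers that need separate handling.

```python
# Hypothetical allow-list of fields a sentiment service actually needs.
ALLOWED_FIELDS = {"note_text", "encounter_type"}

def minimum_necessary(record, allowed=ALLOWED_FIELDS):
    """Return a copy of the record containing only allow-listed fields."""
    return {key: value for key, value in record.items() if key in allowed}

# Hypothetical source record (illustration only).
record = {
    "patient_name": "Jane Example",
    "mrn": "000000",
    "encounter_type": "outpatient",
    "note_text": "Reports improved sleep this week.",
}

payload = minimum_necessary(record)
print(payload)
```

An allow-list fails closed: any new field added to the record later is excluded by default until someone decides it is needed.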

Data transmission must occur through encrypted, EHR-integrated channels [21]. While some medical data can be shared for treatment without written consent, obtaining informed consent for sentiment analysis is considered a best practice. As Core Solutions points out:

"For behavioral health providers, securing sensitive client data is not only a legal requirement but also an ethical obligation" [19].

With compliance protocols in place, platforms like Opus Behavioral Health EHR offer a streamlined approach to integrating sentiment analysis.

Using Opus Behavioral Health EHR

Opus Behavioral Health EHR stands out as an example of effective sentiment analysis integration, building on both technical and ethical frameworks. Its Copilot AI Documentation Assistant automates the capture and organization of clinical notes, reducing charting time by 40% while improving note quality for more accurate sentiment analysis [24].

The Opus Outcome Measurement Tool (OMT) provides a robust system for tracking patient engagement metrics. It includes a customizable library of over 100 assessment tools designed to monitor moods, behaviors, and progress trends [24][23]. Real-time dashboards give clinicians a clear view of patient patterns and trends, offering data-driven insights into their overall progress [24]. Andrea Horwitz, Clinical Director, shares a real-world example of its impact:

"Reviewing weekly treatment results shows me what is really happening with my clients, even if they are not able to express it in session. I was able to review the results of my client's assessments to see that his anxiety was out of range... We were able to work together to prevent a relapse, a crisis, and potential tragedy due to the Opus Patient Engagement system" [23].

Opus also supports remote patient engagement, enabling clinicians to send secure links for patients to record their experiences in their own words. These results are delivered directly to the EHR, maintaining high security standards that align with Joint Commission (JCAHO) recommendations for patient feedback models [23]. This feature allows for continuous sentiment tracking between sessions, offering clinicians valuable insights while ensuring patient data remains protected.

Future Directions in AI-Driven Outcome Analytics

As AI continues to evolve, its role in refining outcome analytics for behavioral health is poised to expand, building on the progress already achieved.

Multimodal Sentiment Analysis

The next wave of sentiment analysis goes beyond analyzing text by incorporating multiple data streams like facial expressions, speech patterns, and physiological signals such as EEG, ECG, and heart rate. This approach provides a deeper understanding of patient emotions by bridging the gap between what people say and how they express themselves nonverbally [25].

Advanced multi-task models are designed to reconcile inconsistencies between these emotional signals across different channels [25]. For example, research has shown that combining acoustic features with linguistic analysis enhances accuracy. One study involving 269 patients found that integrating these data types significantly improved the prediction of 90-day treatment retention, with a BERT-based model reaching an AUC of 0.81 [15]. These multimodal techniques not only deepen clinical insights but also enable more tailored treatment plans.
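The article does not say how the cited study combined modalities, so the following sketch assumes the simplest option, often called late fusion: each modality produces its own probability and the two are blended with a weight. The function name, weight, and input values are hypothetical.

```python
def late_fusion(text_prob, audio_prob, text_weight=0.7):
    """Blend per-modality probabilities with a weighted average (weight is an assumption)."""
    if not 0.0 <= text_weight <= 1.0:
        raise ValueError("text_weight must be between 0 and 1")
    for prob in (text_prob, audio_prob):
        if not 0.0 <= prob <= 1.0:
            raise ValueError("probabilities must be between 0 and 1")
    return text_weight * text_prob + (1.0 - text_weight) * audio_prob

# Hypothetical outputs from a text model and an acoustic model for one session.
fused = late_fusion(text_prob=0.8, audio_prob=0.4, text_weight=0.75)
print(f"fused dropout risk = {fused:.2f}")
```

Weighting the text model more heavily mirrors the earlier observation that text-based features tend to contribute more than acoustic markers, though the right weight would have to be learned from validation data.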

Integrated Outcome Models

Future AI models aim to combine unstructured patient narratives with clinical metrics, social determinants of health, and patterns of healthcare utilization [2]. This approach moves away from oversimplifying complex patient experiences into a single numerical score, instead embracing their full complexity.

Emerging platforms are blending large language models with traditional psychometric tools like Item Response Theory. This hybrid approach maintains scientific rigor while capturing the subtleties of patient experiences [2]. Generative AI is also being leveraged to support narrative-based methods, allowing for a richer understanding of patient nuances alongside standard metrics. Between 2020 and 2022, over half (53.9%) of NLP studies focused on mental health interventions, highlighting the rapid growth of this field [6].

These integrated models pave the way for systems that can easily adapt to new data sources while scaling to meet diverse clinical needs.

Expanding Software Capabilities

Behavioral health platforms are now incorporating tools like ambient dictation, which automatically summarizes clinical notes. This reduces administrative burdens while also capturing sentiment data for further analysis [4].

For example, Opus Behavioral Health EHR includes features like automated risk stratification, real-time alerts, and multimodal data integration to drive quality improvement. These tools generate precise risk scores and enable dynamic treatment adjustments, directly enhancing patient care. Additionally, the adoption of open API architectures ensures that sentiment insights can seamlessly flow across different care settings, fostering a connected ecosystem for measuring and improving patient outcomes [4].

Conclusion: AI Sentiment Analysis in Behavioral Health

AI sentiment analysis is reshaping behavioral health by uncovering patient emotions from clinical data. These tools analyze emotional tones in clinical notes, patient narratives, and brief written responses to predict symptom changes weeks in advance and flag at-risk patients before crises occur. For example, a 2024 study with 467 participants showed that ChatGPT 3.5 and 4.0 matched human accuracy in predicting changes in depression scores at three-week follow-ups - all based on under 10 minutes of patient writing [5].

The practical benefits are just as striking. AI-powered screeners embedded in EHR systems offer real-time support by automatically identifying patients who need specialized care. At the University of Wisconsin–Madison, an AI-driven screener roughly halved the odds of 30-day readmission (odds ratio: 0.53), saving $6,801 per avoided readmission [13]. Additionally, these tools help track treatment fidelity, ensuring providers stick to evidence-based methods like Motivational Interviewing and Cognitive Behavioral Therapy [6].

These systems go beyond rigid scoring to capture the nuances of patient emotions. Language-based AI models, for instance, have achieved an AUC of 0.81 in predicting treatment dropout risk - outperforming standard intake assessments - all for less than 0.1 cent per individual analyzed [15] [5]. This level of insight enables more integrated and responsive digital solutions.

Platforms such as Opus Behavioral Health EHR are leveraging these advancements by embedding AI capabilities directly into clinical workflows. Features like automated risk stratification, real-time alerts, and multimodal data analysis help treatment centers pinpoint patients who are struggling, enabling proactive outreach and on-the-fly treatment adjustments based on AI-driven insights [4].

With depression and anxiety rates surging by 25% post-pandemic [16], and neuropsychiatric disorders projected to cost $6 trillion annually by 2030 [6], the need for scalable, cost-efficient solutions has never been greater. AI sentiment analysis enhances diagnostic precision, customizes care, and reduces administrative strain - all while keeping clinicians at the helm of decision-making.

FAQs

How does AI sentiment analysis help predict patient outcomes in behavioral health?

AI sentiment analysis leverages advanced natural language processing (NLP) to uncover emotional cues in patient-generated text, such as therapy notes, intake forms, or portal messages. By analyzing emotions like sadness, anxiety, or optimism and observing how they evolve over time, it helps clinicians identify patterns that might indicate potential risks - worsening mood disorders, lapses in treatment adherence, or even relapse.

These insights empower healthcare providers to act proactively, whether by tweaking treatment plans or offering timely interventions. For example, tools integrated into platforms like Opus Behavioral Health EHR allow clinicians to effortlessly track emotional trends alongside traditional health metrics, enabling more informed decisions and promoting compassionate, personalized care.

What is AI sentiment analysis, and how is it used in healthcare?

AI sentiment analysis in healthcare leverages natural language processing (NLP) and machine learning to interpret patient feedback - whether written or spoken - and evaluate the emotional tone behind it. By analyzing language, these systems classify sentiments as positive, neutral, or negative, offering clinicians a deeper understanding of patient experiences.

The technology relies on machine learning models for emotion detection and deep neural networks, like transformer-based large language models (LLMs), which excel at grasping context and subtle nuances in communication. These models are often customized with healthcare-specific data, such as clinical notes or patient surveys, to ensure they perform well in medical settings.

When integrated into tools like electronic health records (EHRs), sentiment analysis provides healthcare providers with actionable insights into patient emotions. This can lead to improved communication, better monitoring of behavioral health, and the ability to identify patients who might need extra support, ultimately enhancing treatment outcomes.

How does AI-driven sentiment analysis enhance patient outcomes and treatment quality?

AI-driven sentiment analysis is reshaping how we understand patient-reported outcomes (PROs). By examining free-text responses, it picks up on emotional states like stress, anxiety, or satisfaction - often capturing subtle cues that traditional methods might overlook. This approach gives clinicians a clearer, more consistent view of a patient’s mental health, paving the way for earlier interventions and tailored care strategies.

In behavioral health, tools like Opus Behavioral Health EHR integrate sentiment analysis to monitor patient feedback in real time. For example, when comments suggest rising anxiety levels or signs of disengagement, the system can trigger alerts. This allows clinicians to quickly adjust treatment plans, track adherence more effectively, and make data-informed improvements to care. The result? A more dynamic and responsive approach that boosts both patient outcomes and the overall quality of treatment.