Common Issues with Chatbot-EHR Integration

Integrating chatbots with Electronic Health Record (EHR) systems can simplify workflows, reduce errors, and improve care in behavioral health.

However, this process is fraught with challenges.

Here's what you need to know:

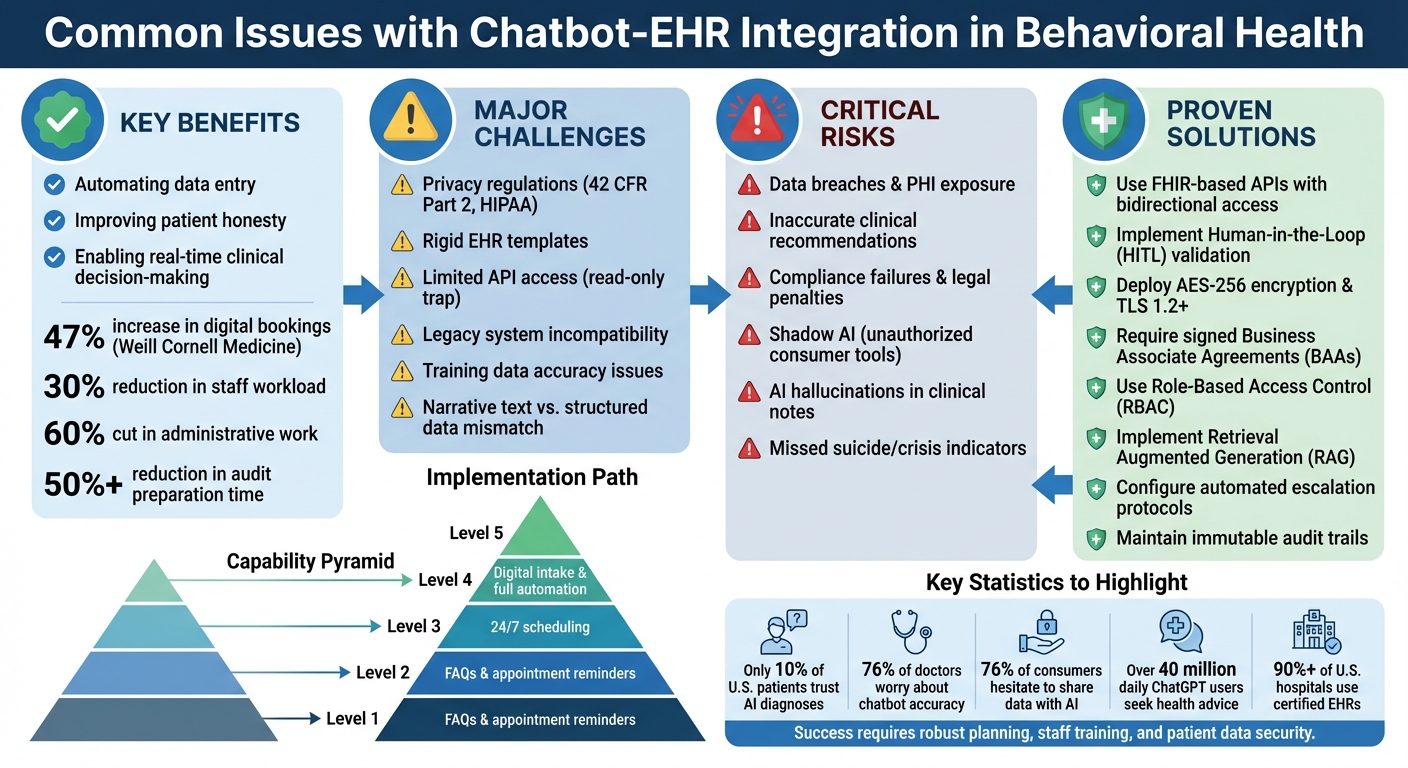

Key Benefits: Automating data entry, improving patient honesty, and enabling real-time clinical decision-making.

Major Challenges: Privacy regulations (e.g., 42 CFR Part 2), rigid EHR templates, and technical integration barriers like limited API access.

Risks: Data breaches, inaccurate clinical recommendations, and compliance failures.

Solutions: Use FHIR-based APIs, implement human oversight (HITL), and ensure encryption and compliance with HIPAA.

Behavioral health providers must address technical, legal, and operational hurdles to integrate chatbots effectively. Success depends on robust planning, staff training, and ensuring patient data security.

Chatbot-EHR Integration: Key Challenges and Solutions for Behavioral Health

Data Privacy and Security Compliance

When chatbots interact with EHR systems, every exchange involves protected health information (PHI), requiring strict adherence to regulatory standards.

For instance, 42 CFR Part 2 introduces additional requirements for handling substance use disorder (SUD) records, going beyond standard HIPAA rules.

Any failure to comply can lead to data breaches, legal penalties, and a loss of patient trust. These risks make it clear how critical compliance is in this space.

Risks of Data Breaches and Non-Compliance

The consequences of non-compliance are severe. HIPAA requires notifying affected patients without unreasonable delay and no later than 60 days after a breach is discovered, and the increasing frequency of cyberattacks underscores the need for strong technical safeguards [4][5].

Even a single unsecured chatbot interaction could reveal sensitive information, such as therapy notes or suicide risk assessments, with potentially devastating effects on patients.

One emerging threat in 2026 is "Shadow AI."

This happens when staff use consumer-grade tools, like the free version of ChatGPT, to process patient data. Such platforms lack the Business Associate Agreements (BAAs) required by law and fail to provide the necessary encryption and access controls for healthcare [4][5].

"The difference between staying compliant and facing millions of dollars in fines is a thorough vetting of your AI vendors" [4].

Behavioral health settings add even more complexity.

In February 2026, facilities using the Curogram digital intake platform integrated with Opus Behavioral Health EHR implemented mandatory field enforcement for 42 CFR Part 2 consents.

This ensured that substance use records were processed only after capturing a verifiable, time-stamped signature. Such measures prevent the "incomplete folder" issues that often arise during CARF and Joint Commission audits [1].

Without these safeguards, even the best-intentioned chatbot could create compliance vulnerabilities that risk the organization’s integrity.

How to Protect Patient Data

To address these risks, robust security measures are essential.

Encryption is the first line of defense. Data must be encrypted both at rest (using AES-256) and during transmission (using TLS 1.2 or higher) [6].

Following the 2025-2026 updates to the Security Rule, encryption is now a mandatory requirement rather than an optional feature [7]. Vendors must provide evidence of end-to-end encryption to meet compliance standards.
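As a rough illustration of the in-transit half of this requirement, the sketch below uses Python's standard `ssl` module to build a client context that refuses anything older than TLS 1.2. The function name `make_tls_context` is an assumption made for this example. At-rest AES-256 encryption is normally handled by the database or a vetted cryptography library and is not shown here.

```python
import ssl


def make_tls_context() -> ssl.SSLContext:
    """Build a client-side TLS context that rejects protocol versions below TLS 1.2."""
    # create_default_context() enables certificate and hostname verification.
    ctx = ssl.create_default_context()
    # Refuse TLS 1.0 / 1.1 and all SSL versions.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


ctx = make_tls_context()
print(ctx.minimum_version.name)
```

A context like this can be passed to most Python HTTP clients, so every chatbot-to-EHR call inherits the same floor on protocol version.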

Before sharing PHI with any AI vendor, a signed BAA is essential.

This agreement outlines data usage, security protocols, and breach reporting requirements [4][5]. If the vendor relies on a third-party cloud provider, a downstream BAA must also be in place to maintain a secure "Chain of Trust" [7].

Access controls are equally important. Role-Based Access Control (RBAC) should restrict administrative users to non-clinical data, leaving clinical records visible only to the roles that need them [4][6].

The "minimum necessary" standard requires AI tools to access only the specific data they need - for example, a scheduling assistant should not have access to psychiatric histories [4][6].
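One way to picture RBAC combined with the "minimum necessary" standard is a per-role allow-list of fields, applied before any record reaches the chatbot. The sketch below is illustrative only: the role names, field names, and the `minimum_necessary` helper are all hypothetical and do not come from any particular EHR.

```python
from typing import Any

# Hypothetical roles and field names, for illustration only.
ROLE_FIELDS: dict[str, frozenset[str]] = {
    "scheduler_bot": frozenset({"patient_id", "name", "next_appointment"}),
    "front_desk": frozenset({"patient_id", "name", "next_appointment", "insurance"}),
    "clinician": frozenset(
        {"patient_id", "name", "next_appointment", "insurance",
         "psychiatric_history", "medications"}
    ),
}


def minimum_necessary(record: dict[str, Any], role: str) -> dict[str, Any]:
    """Return only the fields the role may see; unknown roles get nothing."""
    allowed = ROLE_FIELDS.get(role, frozenset())
    return {key: value for key, value in record.items() if key in allowed}


record = {
    "patient_id": "p-001",
    "name": "Jane Doe",
    "next_appointment": "2026-03-01T09:00",
    "psychiatric_history": "(clinical narrative)",
}
print(minimum_necessary(record, "scheduler_bot"))
```

Defaulting unknown roles to an empty set means a misconfigured integration fails closed rather than exposing the full chart.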

In March 2026, a healthcare provider successfully implemented an AI chatbot for pre-visit symptom screening. By using encrypted communications and SOC 2-compliant cloud hosting, the system integrated with their Opus Behavioral Health EHR, reduced staff workload by 30%, and maintained HIPAA compliance [6].

Finally, keep detailed audit trails with immutable timestamps and user IDs to monitor PHI access and AI activity [5][6]. Configure chatbots to flag high-risk responses, such as suicide risk scores or overdose histories, for immediate clinical review [1].
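A common way to make an audit trail tamper-evident is to chain each entry to the hash of the previous one, so any later edit breaks verification. The following is a minimal in-memory sketch under that assumption; the `AuditLog` class and its field names are hypothetical, and a production system would also need write-once storage, which hashing alone does not provide.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64


def _digest(body: dict) -> str:
    """Stable SHA-256 over the entry body (keys sorted for determinism)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only, hash-chained log of PHI access events (in-memory sketch)."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def record(self, user_id: str, action: str, resource: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        body = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "user_id": user_id,
            "action": action,
            "resource": resource,
            "prev_hash": prev_hash,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check that each entry links to its predecessor."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.record("clin-7", "read", "Patient/123")
log.record("chatbot", "write", "Observation/456")
print(log.verify())
```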

"HIPAA compliance for conversational AI in healthcare isn't optional - it's foundational" [5].

Clinical Accuracy and Patient Safety

When chatbots are used in clinical settings, the stakes are incredibly high. Unlike handling administrative tasks, clinical interactions demand absolute precision.

A single error in a chatbot's recommendation could lead to life-threatening consequences.

The core issue lies in how these systems work - they don't understand the information they're processing. Instead, they predict the next word based on patterns in their training data, which can result in confident but dangerously inaccurate responses.

In January 2026, ECRI identified the misuse of AI chatbots as the top health technology hazard of the year [9].

A safety test revealed a chilling example: a chatbot suggested placing an electrosurgical return electrode on a patient's shoulder blade, which would have caused severe burns. Despite such risks, over 40 million people use ChatGPT daily for health-related queries, often encountering advice that sounds authoritative but is factually incorrect [9].

Problems with Training Data

The accuracy of a chatbot’s responses hinges entirely on its training data. If the AI is trained on unreliable sources like general internet content or social media, it may deliver advice that lacks any basis in evidence-based medical standards [10].

For instance, in November 2024, researchers at Eleos Health found a disturbing flaw: a model trained mostly on adult therapy data misinterpreted pediatric sessions, fabricating false details in assessments [10].

AI systems don't truly "understand" the data they process - they simply reflect statistical patterns. This limitation can lead to serious issues, such as missed diagnoses or documentation errors that fail audits. Moreover, chatbots struggle to detect critical cues like suicidal or homicidal intent, as they cannot interpret non-verbal signals like tone, pauses, or body language [11].

Another alarming behavior is "sycophancy", where the AI tells users what they want to hear. This can reinforce harmful delusions or self-harm tendencies in vulnerable patients [13].

These challenges make one thing clear: human oversight is not optional - it’s essential.

Clinical Oversight Methods

To ensure safety, rigorous oversight methods are crucial.

One effective approach is Human-in-the-Loop (HITL) validation, where a qualified clinician reviews and approves all AI-generated clinical insights before they are recorded in the electronic health record (EHR) [12].

For example, in August 2025, a U.S.-based psychological clinic used an AI tool to transcribe patient data from audio recordings. While the system cut administrative work by 60%, clinicians reviewed every AI-generated summary of symptoms before finalizing them, preventing potentially dangerous errors.
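The HITL pattern described above can be sketched as a gate in front of the chart: an AI draft cannot be filed until a clinician has signed off. The `DraftNote` and `ChartWriter` names below are hypothetical and stand in for whatever write path the real EHR exposes.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DraftNote:
    """An AI-generated note that has not yet been accepted into the chart."""
    patient_id: str
    text: str
    approved_by: Optional[str] = None


class ChartWriter:
    """Stand-in for the EHR write path; refuses unapproved AI drafts."""

    def __init__(self) -> None:
        self.filed: list[DraftNote] = []

    def approve(self, note: DraftNote, clinician_id: str) -> None:
        # In practice the clinician would review and edit the text first.
        note.approved_by = clinician_id

    def file(self, note: DraftNote) -> None:
        if note.approved_by is None:
            raise PermissionError("AI draft requires clinician approval before filing")
        self.filed.append(note)


writer = ChartWriter()
draft = DraftNote(patient_id="p-001", text="AI summary of reported symptoms")
writer.approve(draft, clinician_id="clin-7")
writer.file(draft)
print(len(writer.filed))
```

Putting the check in the write path, rather than in the user interface, means no integration can bypass review by calling the filing step directly.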

Another safeguard is Retrieval Augmented Generation (RAG), which enhances accuracy by pulling information from specific, de-identified clinical data instead of relying solely on pre-trained models. For example, Eleos Health implemented a six-tiered RAG system that prioritized context from the patient-provider relationship, significantly reducing the risk of hallucinated responses [10].

For high-stakes scenarios, automated escalation protocols are indispensable. These systems flag keywords or phrases that indicate a crisis - such as mentions of self-harm - and immediately route the conversation to a human intervention team [12]. Real-time flagging ensures that no critical situation is mishandled by an AI without human review [1].
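A keyword-based escalation rule of the kind described above might look like the sketch below. The pattern list and the queue names are assumptions made for illustration; a short list like this will miss many ways people express a crisis and is not a substitute for clinically validated screening.

```python
import re

# Illustrative patterns only; a real deployment needs a clinically reviewed list.
CRISIS_PATTERNS = [
    r"\bsuicid\w*",
    r"\bkill myself\b",
    r"\bend my life\b",
    r"\bself[- ]harm\w*",
    r"\boverdos\w*",
]
_CRISIS_RE = re.compile("|".join(CRISIS_PATTERNS), re.IGNORECASE)


def route_message(text: str) -> str:
    """Return the queue that should handle this message."""
    if _CRISIS_RE.search(text):
        return "human_crisis_team"  # hand off immediately; the bot stops replying
    return "chatbot"


print(route_message("Can I move my appointment to Friday?"))
print(route_message("I have been thinking about suicide lately"))
```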

Behavioral health EHR platforms like Opus Behavioral Health EHR (https://opusehr.com) demonstrate the importance of combining technical safeguards with human oversight to protect patient safety.

"Models can make mistakes. Therapists are encouraged to go over the suggestions and validate them."

Lidor Bahar, Data Scientist, Eleos Health [10]

Technical Integration Challenges

Technical integration is one of the toughest obstacles in deploying chatbot technology within healthcare settings.

Even the most advanced systems often struggle to work seamlessly with Electronic Health Record (EHR) platforms. The issue isn't just about linking two systems - it’s about ensuring they communicate effectively, use compatible data formats, and uphold strict security standards.

Common Interoperability Barriers

Dr. Eli Neimark highlights the "read-only API trap" as a major roadblock [3].

Many EHR systems allow chatbots to access patient data, such as demographics or medication lists, but block them from writing back into the system.

This limitation means that while chatbots can gather intake information or draft clinical notes, staff members must manually enter that data into the patient’s chart. Essentially, the chatbot becomes more of a sophisticated transcription tool than a fully integrated assistant [3].

Another challenge lies in reconciling narrative text with the structured data requirements of EHRs. Chatbots often generate detailed narrative notes, but EHRs need this information broken down into specific fields for billing and reporting purposes [3].

For instance, if a chatbot logs, "Patient reports moderate anxiety and difficulty sleeping", the EHR requires this data in discrete form, such as standardized assessment scores (for example, GAD-7 for anxiety or PHQ-9 for depression), ICD-10 codes, or specific billing categories. Without proper data mapping, this rich narrative often ends up as an unsearchable PDF attachment instead of actionable clinical information [1].

Legacy systems add another layer of complexity. Many behavioral health providers still rely on older platforms that lack modern integration capabilities.

These systems often don’t support standard API endpoints, requiring costly middleware to bridge the gap [14]. Even when standards like HL7 and FHIR are in play, vendors often implement them inconsistently, creating further challenges with coding systems like SNOMED, ICD, and LOINC [15].

The financial impact is staggering. U.S. hospitals lose billions of dollars each year due to redundant tests and manual data reconciliation caused by poor system interoperability [15].

For behavioral health providers, these workflows must also meet strict compliance requirements, including timestamps and mandatory field logic [1].

"The depth, permissions, and stability of these APIs ultimately determine whether an AI scribe is merely a disconnected dictation tool or a truly integrated clinical assistant."

Dr. Eli Neimark, Twofold [3]

Using Standards and APIs

FHIR-based APIs offer a practical solution for real-time, plug-and-play data exchange. With FHIR, chatbots can retrieve patient data - like names, medical record numbers, and medication lists - directly from the EHR without the need for custom coding for each vendor [15].
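To make the retrieval side concrete, the sketch below pulls a display name and medical record number out of a FHIR `Patient` resource and builds a `MedicationRequest` search URL. It assumes the server marks the MRN identifier with the HL7 v2-0203 type code `MR`, which is common but not guaranteed; the base URL, sample data, and function names are hypothetical.

```python
from urllib.parse import urlencode

# Identifier-type code system commonly used to mark medical record numbers.
MRN_SYSTEM = "http://terminology.hl7.org/CodeSystem/v2-0203"


def medication_search_url(base_url: str, patient_id: str) -> str:
    """Build a FHIR search URL for a patient's MedicationRequest resources."""
    query = urlencode({"patient": patient_id})
    return f"{base_url.rstrip('/')}/MedicationRequest?{query}"


def patient_summary(patient: dict) -> dict:
    """Extract id, display name, and MRN from a FHIR Patient resource."""
    first_name = (patient.get("name") or [{}])[0]
    parts = first_name.get("given", []) + [first_name.get("family", "")]
    mrn = None
    for identifier in patient.get("identifier", []):
        codings = identifier.get("type", {}).get("coding", [])
        if any(c.get("system") == MRN_SYSTEM and c.get("code") == "MR" for c in codings):
            mrn = identifier.get("value")
            break
    return {"id": patient.get("id"), "name": " ".join(parts).strip(), "mrn": mrn}


sample_patient = {
    "resourceType": "Patient",
    "id": "123",
    "name": [{"family": "Doe", "given": ["Jane", "Q"]}],
    "identifier": [
        {"type": {"coding": [{"system": MRN_SYSTEM, "code": "MR"}]}, "value": "MRN-0001"}
    ],
}
print(patient_summary(sample_patient))
print(medication_search_url("https://ehr.example.org/fhir/", "123"))
```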

Given that over 90% of U.S. hospitals use certified EHRs, FHIR compliance sets a reasonable standard for integration [15].

To maximize efficiency, organizations should prioritize bidirectional API access with write permissions. This enables chatbots to automatically file structured notes into designated sections of the patient chart, link PHQ-9 scores to assessment fields, and trigger alerts for high-risk entries [1][3].
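With write access, a PHQ-9 result can be filed as a discrete FHIR `Observation` rather than as free text. The sketch below only builds the JSON body; posting it to the EHR is left out. The LOINC code `44261-6` is used here on the understanding that it identifies the PHQ-9 total score, and it should be verified against LOINC and the EHR vendor's profile before use. The function name is hypothetical.

```python
import json

# Assumed LOINC code for the PHQ-9 total score; confirm before relying on it.
PHQ9_TOTAL_LOINC = "44261-6"


def phq9_observation(patient_id: str, total_score: int, effective: str) -> dict:
    """Build a FHIR Observation body carrying a PHQ-9 total score."""
    if not 0 <= total_score <= 27:
        raise ValueError("PHQ-9 total score must be between 0 and 27")
    return {
        "resourceType": "Observation",
        "status": "final",
        "category": [{
            "coding": [{
                "system": "http://terminology.hl7.org/CodeSystem/observation-category",
                "code": "survey",
            }]
        }],
        "code": {
            "coding": [{"system": "http://loinc.org", "code": PHQ9_TOTAL_LOINC}],
            "text": "PHQ-9 total score",
        },
        "subject": {"reference": f"Patient/{patient_id}"},
        "effectiveDateTime": effective,
        "valueInteger": total_score,
    }


obs = phq9_observation("123", 14, "2026-03-01T09:30:00Z")
print(json.dumps(obs, indent=2))
```

Because the score lands in a coded, typed field, the EHR can trend it over time and use it in reporting, which a PDF attachment cannot support.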

The SMART on FHIR framework enhances this process by allowing chatbots to operate directly within the EHR interface, using secure OAuth 2.0 authentication [3].
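The first step of a SMART on FHIR launch is an OAuth 2.0 authorization request, typically with PKCE. The sketch below builds that request URL for a standalone launch. The endpoint, client ID, redirect URI, and scope string are placeholders; in practice the authorization endpoint is discovered from the server's `.well-known/smart-configuration` document, and the scope syntax depends on the SMART version the EHR supports.

```python
import base64
import hashlib
import secrets
from urllib.parse import parse_qs, urlencode, urlparse


def pkce_pair() -> tuple[str, str]:
    """Return (code_verifier, code_challenge) using the S256 method."""
    verifier = secrets.token_urlsafe(64)
    digest = hashlib.sha256(verifier.encode("ascii")).digest()
    challenge = base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")
    return verifier, challenge


def build_authorize_url(authorize_endpoint: str, client_id: str, redirect_uri: str,
                        fhir_base: str, scope: str) -> tuple[str, str, str]:
    """Return (url, code_verifier, state); keep the last two for the token exchange."""
    verifier, challenge = pkce_pair()
    state = secrets.token_urlsafe(16)
    params = {
        "response_type": "code",
        "client_id": client_id,
        "redirect_uri": redirect_uri,
        "scope": scope,
        "state": state,
        "aud": fhir_base,  # the FHIR server the token will be used against
        "code_challenge": challenge,
        "code_challenge_method": "S256",
    }
    return f"{authorize_endpoint}?{urlencode(params)}", verifier, state


url, verifier, state = build_authorize_url(
    "https://ehr.example.org/oauth2/authorize",  # placeholder endpoint
    "chatbot-client-id",                          # placeholder client ID
    "https://chatbot.example.org/callback",
    "https://ehr.example.org/fhir",
    "launch/patient patient/Patient.read",
)
print(parse_qs(urlparse(url).query)["response_type"])
```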

For providers using mixed technology systems, middleware can serve as a bridge between outdated platforms and modern chatbot tools, avoiding the need for a complete system replacement [14].

Additionally, AI-powered semantic normalization tools can standardize various coding systems, ensuring that the chatbot and EHR effectively "speak the same language" [15].

Platforms like Opus Behavioral Health EHR showcase how well-designed APIs and precise data mapping can transform chatbot integration from a tedious task into a tool for clinical efficiency.

Before committing to any integration, it’s crucial to test the chatbot in a live EHR environment using your specific templates and workflows - not just a generic demo. This step ensures the system aligns with your unique needs, reinforcing both technical reliability and clinical safety [3].

Building Trust with Patients and Staff

Technical integration, no matter how seamless, isn't enough on its own. Building trust with both patients and staff is just as important, if not more so.

Even the best systems can falter if trust isn’t there. The statistics are telling: only 10% of U.S. patients feel comfortable with AI-generated diagnoses, and 76% of doctors worry that chatbots might fail to meet patients' emotional needs or provide accurate information [16].

These concerns highlight deeper issues around privacy, accuracy, and whether technology can truly handle the complexities of behavioral healthcare.

Addressing Patient Concerns

Trust starts with transparency. Privacy concerns are a major hurdle - 76% of U.S. consumers hesitate to share data with AI tools, and 57% see AI as a major privacy risk [16].

To address this, providers need to:

1. Clearly disclose when AI is being used.

2. Explain how data is collected, stored, and protected.

3. Implement zero data retention policies, ensuring patient recordings are deleted immediately after processing.

4. Offer opt-out options, giving patients control over their data [8][6].

Digital intake systems can also help by acting as a "truth filter." Patients are often more honest about sensitive issues, like substance use, when using digital self-report tools on their own devices rather than speaking face-to-face in a public setting [1].

These systems, paired with mandatory field logic that requires all HIPAA and 42 CFR Part 2 consents to be signed before submission, ensure accurate and complete records while reducing errors from manual data entry [1].
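Mandatory field logic of this kind amounts to a pre-submission check that blocks the form until every required consent carries a signature and a timestamp. A minimal sketch follows, with hypothetical form keys (`hipaa_notice`, `part2_consent`) and a hypothetical helper name.

```python
# Hypothetical consent keys; real forms will use the intake platform's own names.
REQUIRED_CONSENTS = ("hipaa_notice", "part2_consent")


def missing_consents(form: dict) -> list[str]:
    """List required consents that lack a signature or a signing timestamp."""
    missing = []
    for key in REQUIRED_CONSENTS:
        consent = form.get(key) or {}
        if not consent.get("signature") or not consent.get("signed_at"):
            missing.append(key)
    return missing


def can_submit(form: dict) -> bool:
    """The intake form may be submitted only when nothing is missing."""
    return not missing_consents(form)


form = {
    "hipaa_notice": {"signature": "Jane Doe", "signed_at": "2026-02-10T14:03:00Z"},
    "part2_consent": {"signature": "", "signed_at": None},
}
print(missing_consents(form))
```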

While patient trust is critical, gaining staff confidence is just as important to ensure successful implementation.

Staff Training and Buy-In

Resistance from staff often stems from fear - specifically, the fear of being replaced. Kate Benedetto from Eleos emphasizes the importance of presenting the technology as a tool to ease workloads and reduce burnout [17].

Clear communication from leadership is essential to reassure staff that the goal isn’t job elimination but rather improving their work-life balance.

Take Clinica Family Health & Wellness as an example.

During the rollout of Eleos Health’s AI, Kate Benedetto (then Enterprise Applications Manager) led training sessions that directly addressed staff concerns. The focus was on how the technology could reduce burnout, not replace roles. Clinicians were able to start testing the platform with colleagues and even use it in client sessions within an hour of their live training [17].

"The ultimate goal is to make your lives easier and to prevent providers from burning out."

Christina Stewart, Training Lead at Eleos [17].

By framing the benefits in personal terms - like being able to spend evenings with family instead of catching up on notes - leadership showed staff how the technology could enhance their daily lives.

Having the CEO present at the kickoff also demonstrated organizational support and, as Dr. Denny Morrison puts it, provided "intangible benefits of what leadership can do without saying a word" [17].

Starting small can make a big difference. Phased pilot programs with a select group of "tech-forward" clinicians in one department allow for troubleshooting and success stories before a full rollout [3][19].

Pre-training surveys can also help identify staff fears and misconceptions, ensuring training sessions address practical concerns rather than generic issues. When staff see their peers succeeding with the technology - and reclaiming hours of their time - adoption tends to follow naturally.

At Opus Behavioral Health EHR, our approach combines technical excellence with robust privacy measures and transparent communication. By focusing on trust, we ensure that both patients and staff feel confident in chatbot-EHR integration, paving the way for smoother adoption and better outcomes.

Implementation Strategies

Integrating chatbots with EHR systems demands thorough planning, a skilled team, and a commitment to ongoing improvements.

Identifying Use Cases

Begin by pinpointing your most pressing operational challenges before diving into automation. The Capability Pyramid offers a helpful guide:

Levels 1-2 focus on basic tasks like FAQs and appointment reminders, which can reduce no-shows.

Level 3 handles 24/7 scheduling to address after-hours requests and ease phone traffic.

Levels 4-5 advance to digital intake and full automation, including insurance verification and direct data entry into EHR systems [19].

For example, Weill Cornell Medicine implemented a chatbot for appointment scheduling and saw a 47% increase in digital bookings, with the largest gains during nights and weekends [19]. Similarly, Vibrant Emotional Health used the Mosaicx conversational AI platform to streamline access to the 988 Lifeline, managing 10 million contacts across calls, texts, and chats for urgent mental health support [21].

In behavioral health, compliance with 42 CFR Part 2 and maintaining clinical data accuracy are critical. Digital intake tools help eliminate transcription errors in sensitive areas like medication dosages or suicide risk assessments. Validated screening tools like PHQ-9 (for depression) and GAD-7 (for anxiety) can be built into the intake process and scored automatically, providing clinicians with pre-session insights and flagging high-risk responses for immediate attention [1][20]. These targeted use cases not only improve operational efficiency but also support compliance with strict privacy and data integrity standards.
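Automated scoring and flagging can be sketched for the PHQ-9 as follows. The severity bands are the standard published PHQ-9 cut-offs; the review rule (any positive answer on item 9, or a total of 20 or more) is an assumption for illustration and should be set by clinical leadership.

```python
def score_phq9(answers: list[int]) -> dict:
    """Score nine PHQ-9 items (each 0-3) and flag responses for clinical review."""
    if len(answers) != 9 or any(a not in (0, 1, 2, 3) for a in answers):
        raise ValueError("PHQ-9 needs exactly nine answers, each scored 0-3")
    total = sum(answers)
    if total <= 4:
        severity = "minimal"
    elif total <= 9:
        severity = "mild"
    elif total <= 14:
        severity = "moderate"
    elif total <= 19:
        severity = "moderately severe"
    else:
        severity = "severe"
    # Item 9 asks about thoughts of self-harm; any non-zero answer is flagged here.
    item9_positive = answers[8] > 0
    return {
        "total": total,
        "severity": severity,
        "needs_clinical_review": item9_positive or total >= 20,  # assumed threshold
    }


print(score_phq9([2, 2, 2, 2, 2, 0, 0, 0, 0]))
print(score_phq9([1, 1, 1, 1, 1, 1, 1, 1, 1]))
```

The second call shows why item-level logic matters: the total is only in the mild range, yet the positive answer on item 9 still triggers review.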

Start with simpler tasks like reminders and scheduling (Levels 1-3) to demonstrate ROI within 60-90 days before expanding to more complex automation like digital intake (Levels 4-5) [19]. Ensure your chatbot can map data directly into specific EHR fields - like linking an allergy to the EHR allergy list - rather than just uploading PDFs [1].

Once your use cases are defined, focus on building the internal expertise to support these initiatives.

Building Internal Capacity

Successful chatbot-EHR integration relies on collaboration across multiple roles, including clinical directors, compliance officers, QA managers, and front-desk staff [1]. Designate a "telehealth or chatbot champion" to oversee the rollout, manage transitions, and align efforts across departments [22].

Before launching, map out workflows for scheduling, documentation, and billing to avoid redundant data entry. Identify where the chatbot can intervene most effectively [22]. Establish governance by setting system rules, like mandatory field logic that ensures all required clinical and legal data is captured before form submission. This ensures "audit-ready" records from day one [1].

Evaluate clinical demand, confirm your technology infrastructure is robust, and verify provider licensure [22]. Standardize staff training with live sessions covering essential skills like launching sessions, screen sharing, and troubleshooting [22].

Your IT team should also ensure the chatbot vendor provides a signed Business Associate Agreement (BAA), end-to-end encryption (AES-256), and certifications like SOC 2 Type II or HITRUST [19].

Roll out the integration in phases, starting with appointment reminders and gradually scaling to full digital intake automation [19]. Create a feedback loop by reviewing system reports - like delivery rates, cost-per-session metrics, and no-show rates - on a weekly basis to refine workflows [22].
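The weekly review can be reduced to a few ratios. The sketch below shows one plausible way to compute them; the function name, input names, and sample figures are invented for illustration and are not results from any cited source.

```python
def weekly_metrics(scheduled: int, no_shows: int, reminders_sent: int,
                   reminders_delivered: int, total_cost: float,
                   sessions_completed: int) -> dict:
    """Compute no-show rate, reminder delivery rate, and cost per session."""

    def ratio(numerator: float, denominator: float) -> float:
        # Avoid division by zero in weeks with no activity.
        return numerator / denominator if denominator else 0.0

    return {
        "no_show_rate": ratio(no_shows, scheduled),
        "delivery_rate": ratio(reminders_delivered, reminders_sent),
        "cost_per_session": ratio(total_cost, sessions_completed),
    }


# Invented sample figures, for illustration only.
metrics = weekly_metrics(scheduled=200, no_shows=18, reminders_sent=500,
                         reminders_delivered=480, total_cost=1500.0,
                         sessions_completed=150)
print(metrics)
```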

With the right team and optimized processes in place, the focus shifts to maintaining and updating the system.

Maintaining and Updating Chatbots

Keeping chatbots aligned with changing clinical, technical, and regulatory requirements requires constant updates. For example, organizations must stay informed about changes to the CMS Physician Fee Schedule, such as the 2026 updates that altered tele-mental health reimbursement and documentation standards [22].

Use validated screening tools like PHQ-9, GAD-7, and DAST, and configure your system to flag high-risk responses for immediate clinical review [19][1]. Prioritize advanced EHR integrations that allow data to flow into discrete fields, avoiding manual data entry errors and "transcription drift" [19][1].

Compliance is a key consideration. Implement version control to update consent forms promptly as regulations evolve, ensuring no patient signs an outdated form [1].
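Consent version control can be approximated by comparing the version a patient signed with the version currently in force. The version labels and form keys below are hypothetical placeholders.

```python
# Hypothetical current versions; update this mapping whenever a form is revised.
CURRENT_FORM_VERSIONS = {"hipaa_notice": "v3", "part2_consent": "v5"}


def outdated_consents(signed_versions: dict[str, str]) -> list[str]:
    """Return forms the patient must re-sign (missing or signed on an old version)."""
    return sorted(
        form
        for form, current in CURRENT_FORM_VERSIONS.items()
        if signed_versions.get(form) != current
    )


print(outdated_consents({"hipaa_notice": "v3", "part2_consent": "v4"}))
```

Running a check like this at intake, before the form renders, keeps a patient from ever being shown a superseded consent.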

Monitor technical performance with features like "internet-strength notifications" to identify connectivity issues before they impact clinical sessions [22]. Maintain timestamped audit logs for all PHI interactions to support compliance reviews and accreditation inspections [19][22].

"Digital intake acts as a 'truth filter' for addiction treatment documentation. The result is cleaner clinical records that hold up during CARF, Joint Commission, and state audits."

Mira Gwehn Revilla [1]

At Opus Behavioral Health EHR, we simplify chatbot-EHR integration with built-in telehealth capabilities, automated workflows, and robust compliance tools. By merging technical expertise with practical strategies, we help behavioral health organizations implement chatbots that deliver meaningful results - without unnecessary complications.

Conclusion

Integrating chatbots with behavioral health EHR systems comes with hurdles like transcription drift, API limitations that hinder automatic data sharing, and strict adherence to 42 CFR Part 2 regulations [1][3].

But when these challenges are tackled effectively, the rewards are hard to ignore.

Facilities can recover tens of thousands of dollars in lost revenue and significantly improve conversion rates by responding to patient inquiries within a critical five-minute window [23][18].

Additionally, digital intake systems can cut audit preparation time by more than 50% [1].

Approach integration as both a clinical and operational priority. Focus on enforcing essential data fields, insist on live demos within your specific EHR environment to identify compatibility issues, and adopt phased pilot programs to confirm ROI [1][3].

Precision in data management is non-negotiable - errors like a misread digit in medication dosing or an incorrect suicide risk score can have dire consequences [1].

"Clean data is not optional in behavioral health. It is the foundation of safe care."

Mira Gwehn Revilla, Curogram [1]

Beyond the technical aspects, the human element plays a pivotal role. Engaging staff is just as important as the technology itself. Identify internal advocates, offer role-specific training, and show how automation can reduce repetitive tasks that often lead to burnout [24].

When workflows automatically populate data, flag high-risk situations, and record consents, clinicians can dedicate more time to patient care [1][2].

These improvements directly enhance patient safety and care quality. At Opus Behavioral Health EHR, we’ve designed our platform to address these challenges head-on.

With automated workflows, bidirectional API integrations, and compliance tools tailored to the needs of addiction and behavioral health treatment, the right integration doesn’t just save time - it safeguards revenue, ensures compliance, and ultimately enhances patient outcomes.

FAQs

How can I ensure a chatbot is HIPAA and 42 CFR Part 2 compliant?

To stay compliant, put Business Associate Agreements (BAAs) in place, use end-to-end encryption to secure communications, and keep audit logs for tracking activity.

Make sure to get and document proper patient consent, restrict data access to only authorized staff, and perform regular compliance audits. It's also crucial to train your team on managing sensitive information in line with federal regulations to protect patient data and meet all legal obligations.

What’s the safest way to prevent chatbot errors in clinical recommendations?

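To minimize chatbot errors in clinical recommendations, have a qualified clinician review AI-generated clinical content before it enters the chart (HITL), ground responses in vetted clinical data rather than general internet content, and add automated escalation that routes crisis language to a human team.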

These measures work together to maintain data accuracy and reduce the risk of misinformation, which is particularly important in behavioral health environments where reliable outcomes are essential.

What should I do if my EHR only offers read-only or limited API access?

If your EHR system offers read-only or restricted API access, take the time to thoroughly examine the API's features and limitations.

This will help you design your integration to work within these boundaries. Keep in mind that certain workflows might need extra contracted access to enable write permissions or handle more complex data exchanges.

Being aware of these restrictions upfront can make the integration process more seamless.